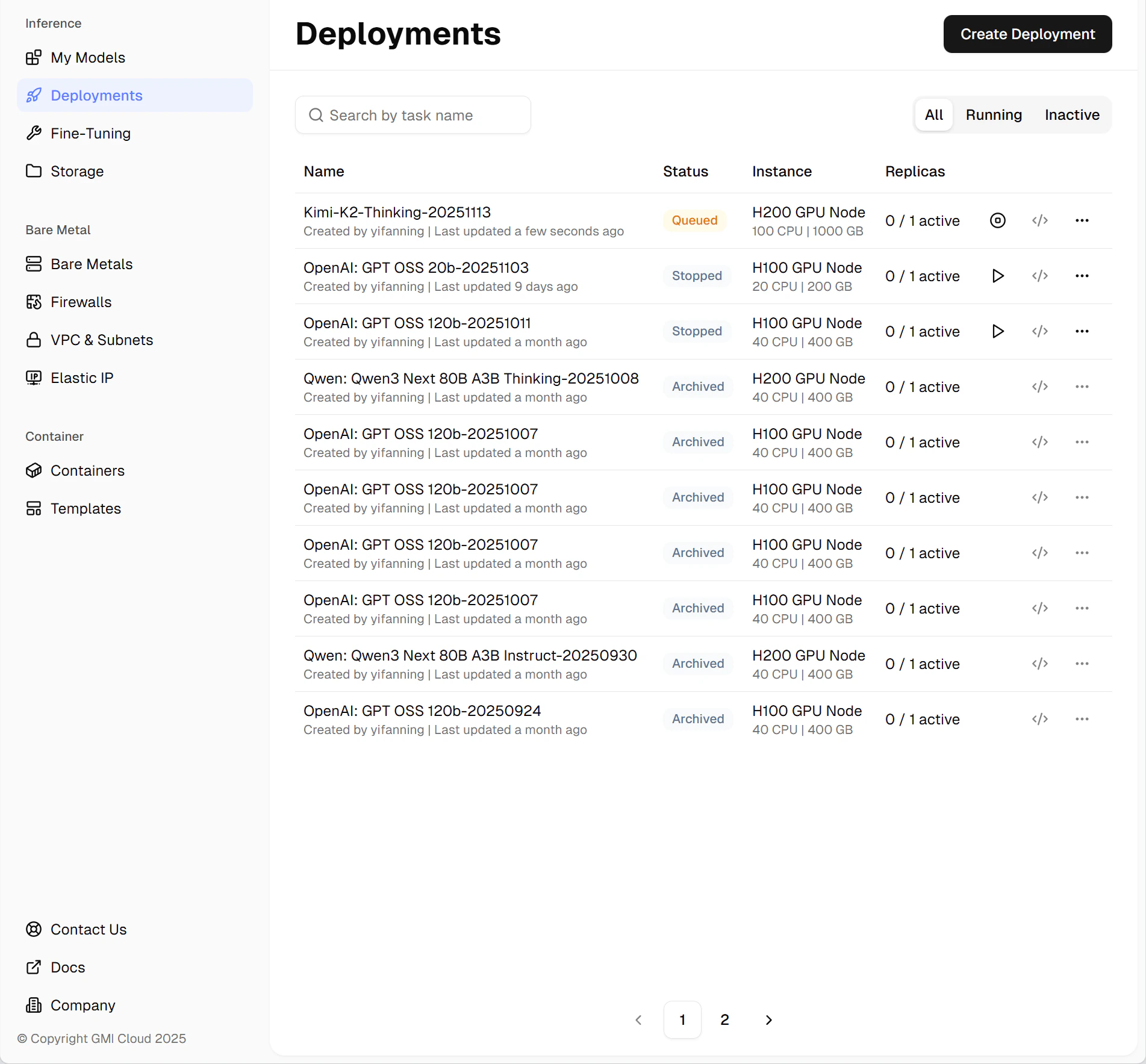

| Status | Description |
| --- | --- |
| Queued | The deployment task has been added to the queue. It will start once all higher-priority tasks have been processed. |
| Deploying | The system is allocating hardware resources and initializing the model endpoint. |
| Running | Deployment is complete, and the endpoint is active and ready for production use. |
| Stopped | The deployment has been manually stopped by the user. It can be restarted at any time. |
| Archived | The deployment has been terminated permanently. It cannot be restarted, but historical records are retained for reference. |
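
Client code that reacts to these statuses could model them as a small enumeration. The following is a minimal sketch, not part of any platform API: `DeploymentStatus`, `is_ready`, `can_restart`, and `is_terminal` are hypothetical names chosen for illustration, and the string values simply mirror the status labels listed above.

```python
from enum import Enum


class DeploymentStatus(Enum):
    """Hypothetical client-side mirror of the deployment status labels."""

    QUEUED = "Queued"
    DEPLOYING = "Deploying"
    RUNNING = "Running"
    STOPPED = "Stopped"
    ARCHIVED = "Archived"


def is_ready(status: DeploymentStatus) -> bool:
    # Only a Running deployment has an active endpoint.
    return status is DeploymentStatus.RUNNING


def can_restart(status: DeploymentStatus) -> bool:
    # Stopped deployments can be restarted; Archived ones cannot.
    return status is DeploymentStatus.STOPPED


def is_terminal(status: DeploymentStatus) -> bool:
    # Archived is permanent: only historical records remain.
    return status is DeploymentStatus.ARCHIVED


# Assumption: the status arrives as the plain label string shown in the table.
status = DeploymentStatus("Stopped")
print(status.name, is_ready(status), can_restart(status), is_terminal(status))
```

Keeping the checks in small predicate functions means calling code never compares raw strings, so a renamed label only needs to change in one place.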